In an increasingly competitive market for cloud computing, reliability matters, and Microsoft has some work to do.

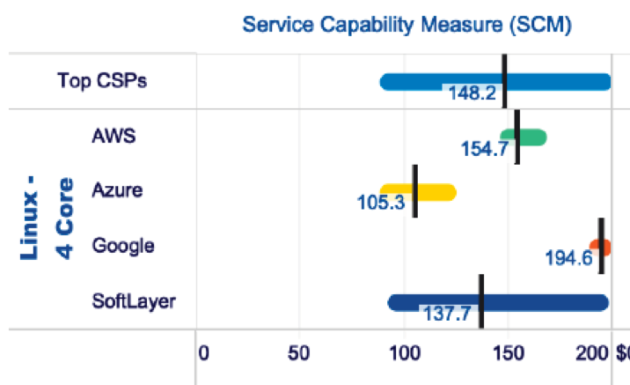

Data compiled by Gartner and Krystallize Technologies shows a noticeable gap between Microsoft Azure and the other two big cloud providers when looking at cloud uptime in North America during 2018. According to Gartner, last year Amazon Web Services and Google had nearly identical uptime statistics for the virtual machines at the heart of cloud services — 99.9987 percent and 99.9982 percent, respectively — while Azure trailed by a small but significant amount, at 99.9792 percent.
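To put those percentages in perspective, they can be converted into approximate minutes of downtime. The sketch below is a back-of-the-envelope calculation, not Gartner's method: it assumes each figure applies to a single virtual machine over the full 2018 calendar year, and the helper name `downtime_minutes` is made up for illustration.

```python
MINUTES_IN_2018 = 365 * 24 * 60  # 2018 was not a leap year

# Uptime percentages as reported above (Gartner, North America, 2018).
UPTIME_PERCENT = {
    "AWS": 99.9987,
    "Google": 99.9982,
    "Azure": 99.9792,
}


def downtime_minutes(uptime_percent, period_minutes=MINUTES_IN_2018):
    """Return the minutes of downtime implied by an uptime percentage.

    Assumption: the percentage covers the whole period uniformly.
    """
    return (100.0 - uptime_percent) / 100.0 * period_minutes


if __name__ == "__main__":
    for provider, pct in UPTIME_PERCENT.items():
        print(f"{provider}: about {downtime_minutes(pct):.1f} minutes of downtime")
```

Under those assumptions, the gap works out to roughly seven minutes of downtime for AWS and nine for Google, against nearly two hours (about 109 minutes) for Azure.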

“Azure has had significant downtime, not just in 2018, but even the first three months of 2019 have been not good for Microsoft,” said Raj Bala, an analyst with Gartner who compiled the data.

As Microsoft courts developers this week at Build with an array of new services, it has also been making changes behind the scenes to improve Azure reliability, said Mark Russinovich, Microsoft Azure CTO, in an interview this week with GeekWire. He plans to showcase a few of those improvements during his annual Azure architecture keynote on Wednesday, and he also defended the company’s track record when dealing with planned and unplanned disruptions to cloud service.

“We’ve invested a ton in capabilities that allow us to do maintenance with little to zero impact on customers,” Russinovich said.

However, that didn’t help last week when a routine DNS migration went haywire, disconnecting Azure services from customers and causing a major outage that lasted several hours and took out essential Microsoft services like Office 365 and Xbox Live, as well as websites such as the one you’re currently visiting.

According to a root-cause analysis released by Microsoft earlier this week, that problem was caused by two separate errors; had only one of them occurred, there would have been no outage to discuss. As a result, Microsoft is putting additional procedures and safeguards into place in hopes of preventing a repeat, Russinovich said.

“When you do thousands of these and everything goes off fine, you’re like, the process works,” he said. “Obviously something like this shows us that there’s a gap, and we’re closing that gap.”

There were two major unplanned events that rocked Microsoft’s cloud services in North America during 2018.

The discovery of the Meltdown and Spectre chip bugs in 2017 forced all cloud providers to update their services in January 2018 with software mitigations that isolated cloud customers from those bugs, but Microsoft had to reboot everyone’s servers to put those changes into effect, and that takes time. And in September 2018, a lightning strike at a data center in its South Central U.S. region caused some cooling systems to fail, damaging servers and knocking out some services for more than 24 hours as engineers worked to preserve customer data and replace the damaged systems.

In the months following the Spectre reboot cycle, Microsoft began rolling out new live migration capabilities that allow it to update servers running customer workloads with little to no disruption. Earlier this year it began rolling those features out across its network of data centers, and they’re now operating nearly everywhere, Russinovich said.

But AWS and Google also needed to update their servers to add the patches for Spectre and Meltdown, and those updates didn’t appear to have as much of an impact on their service uptime. Google likes to tout its live migration capabilities that can update servers with no disruption to customer workloads, while AWS talks far less about the technologies it uses to run its cloud service, which is very on brand for the market-share leader.

Microsoft is also using machine-learning technology to do predictive analytics on its data center hardware, Russinovich said, in hopes of flagging components that are about to fail or underperform based on historical performance data.

On Wednesday Russinovich plans to show off Project Tardigrade, a new Azure service named after the nearly indestructible microscopic animals also known as water bears. This effort will detect hardware failures or memory leaks that can lead to operating system crashes just before they occur and freeze virtual machines for a few seconds so the workloads can be moved to a fresh server.

The company is also continuing to roll out availability zones in its cloud computing regions around the world. Microsoft cloud executives rarely miss an opportunity to point out that they have the most regions around the world of any cloud provider, but only within the last year has Microsoft started building availability zones — separate facilities within a region with independent power and cooling supplies — that help ensure availability in the event of a problem at one building in a region.

Microsoft launched its first availability zones in March 2018 in its Iowa and Paris data centers, and has since rolled them out to several other regions in the U.S., Europe, and Asia. Cloud providers refer to regions and zones a little differently, but AWS and Google Cloud have had far more availability zones up and running for several years.

Operating cloud computing services at scale is really one of the more amazing things human beings have accomplished; the complexity involved is hard to appreciate without a fair amount of knowledge about how these systems work. And even if Microsoft lags AWS and Google in reliability scoring, unless your company is blessed with world-class operations talent, Microsoft is likely still better at operating data centers than most companies managing their own servers.

But turning over control of your most critical business applications to a third-party provider still requires a leap of faith. As cloud companies fight tooth and nail for the next generation of large enterprise customers considering a move to the cloud, uptime numbers will be more and more important.